# SVM hyperplane code

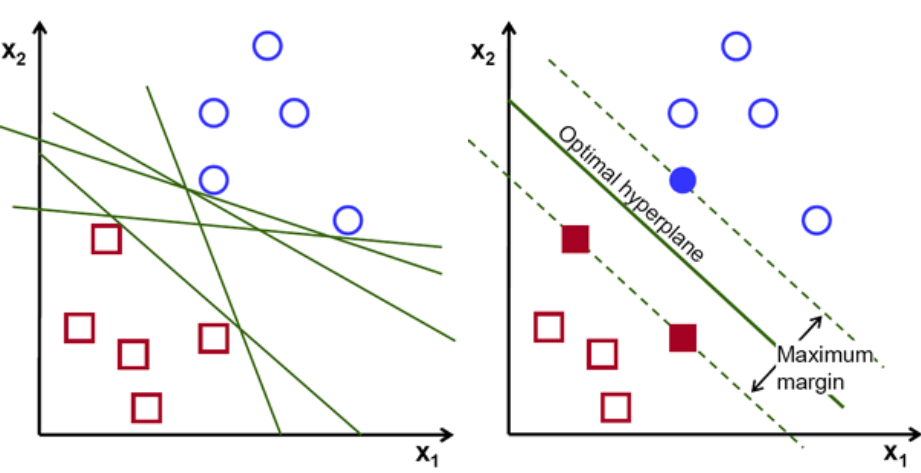

According to OpenCV's "Introduction to Support Vector Machines", a Support Vector Machine (SVM) is a discriminative classifier formally defined by a separating hyperplane. In other words, given labeled training data (supervised learning), the algorithm outputs an optimal hyperplane which categorizes new examples. This machine learning algorithm is used for classification problems and is part of the subset of supervised learning algorithms.

The cost function is used to train the SVM. An SVM cost function approximates the logistic cost with a piecewise linear function. By minimizing the value of J(theta), we make the SVM fit the training data as accurately as possible. In the equation, the functions cost1 and cost0 refer to the cost for an example where y=1 and the cost for an example where y=0, respectively.

For kernel SVMs, the cost is computed on kernel (similarity) features rather than on the raw inputs. Polynomial features tend to be computationally expensive and may increase runtime on large datasets. Instead of adding more polynomial features, it is better to add landmarks and test the proximity of other data points against them. Each member of the training set can be considered a landmark, and a kernel is the similarity function that measures how close an input is to those landmarks.

## Large Margin Classifier

An SVM will find the line or hyperplane that splits the data with the largest margin possible. Outliers can sway the line in a certain direction, but a sufficiently small C value strengthens regularization and keeps the boundary from bending to fit them.

The following is the start of code written for training, predicting and finding accuracy for an SVM in Python: `import numpy as np`, followed by `def __init__(self, inputDim, outputDim):`.
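To make the cost function and the train/predict/accuracy steps concrete, here is a minimal sketch of a linear SVM in NumPy. It is not the original code, which is cut off above. The function names (`svm_cost`, `gaussian_kernel`, `train`, `predict`, `accuracy`), the hinge-style definitions of `cost1` and `cost0`, the subgradient-descent training loop, and the toy dataset are all assumptions chosen for illustration.

```python
import numpy as np


def cost1(z):
    """Piecewise-linear cost for a y = 1 example: zero once z >= 1."""
    return np.maximum(0.0, 1.0 - z)


def cost0(z):
    """Piecewise-linear cost for a y = 0 example: zero once z <= -1."""
    return np.maximum(0.0, 1.0 + z)


def svm_cost(theta, X, y, C):
    """J(theta) = C * sum(y*cost1(z) + (1-y)*cost0(z)) + 0.5 * sum(theta[1:]^2)."""
    z = X @ theta
    data_term = np.sum(y * cost1(z) + (1 - y) * cost0(z))
    # The bias term theta[0] is left out of the regularizer.
    return C * data_term + 0.5 * np.sum(theta[1:] ** 2)


def gaussian_kernel(x, landmark, sigma=1.0):
    """Similarity of x to a landmark: 1 when identical, near 0 when far apart."""
    return np.exp(-np.sum((x - landmark) ** 2) / (2.0 * sigma ** 2))


def train(X, y, C=1.0, lr=0.01, epochs=500):
    """Minimize svm_cost by batch subgradient descent (X includes a bias column)."""
    theta = np.zeros(X.shape[1])
    for _ in range(epochs):
        z = X @ theta
        # Subgradient w.r.t. z: -1 where cost1 is active, +1 where cost0 is active.
        g = -(y * (z < 1)) + (1 - y) * (z > -1)
        grad = C * (X.T @ g)
        grad[1:] += theta[1:]  # gradient of the regularization term
        theta -= lr * grad
    return theta


def predict(theta, X):
    """Predict 1 on the non-negative side of the hyperplane, else 0."""
    return (X @ theta >= 0).astype(int)


def accuracy(theta, X, y):
    """Fraction of examples whose predicted label matches y."""
    return float(np.mean(predict(theta, X) == y))


# Toy, linearly separable data (made up for this sketch).
points = np.array([[2.0, 2.0], [3.0, 1.0], [1.0, 3.0], [2.0, 3.0],
                   [-2.0, -2.0], [-3.0, -1.0], [-1.0, -3.0], [-2.0, -3.0]])
y = np.array([1, 1, 1, 1, 0, 0, 0, 0])
X = np.hstack([np.ones((len(points), 1)), points])  # prepend the bias column

theta = train(X, y, C=1.0)
print("training accuracy:", accuracy(theta, X, y))
```

In this sketch a smaller `C` shrinks the data term relative to the `0.5 * sum(theta[1:]^2)` penalty, which is the regularization effect described above. To use landmarks instead of raw features, each row of `X` could be replaced by its `gaussian_kernel` similarities to the training points before calling `train`.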